Neurofeedback

Purpose

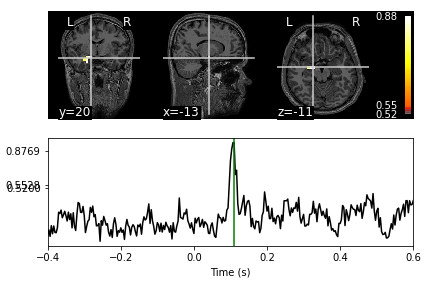

Create real-time feedback based on amygdala responses. An LCMV (linearly constrained minimum variance) adaptive beamformer will be used to localize the subcortical amygdala responses. Amygdala activity will be estimated in real time and sent to the stimulus computer, which will use it to deliver adaptive stimuli.
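For reference, the standard unit-gain LCMV filter for a target source at location $r$ is shown below. Here $x(t)$ is the vector of sensor measurements at time $t$, $C$ is the sensor data covariance, $N$ is the number of channels, and $L(r)$ is the lead field of $r$ (channels by orientations). The filter passes activity from $r$ with unit gain, $W(r)L(r) = I$, while minimizing the total output variance $\operatorname{tr}(W C W^\top)$, which suppresses activity from other locations. The diagonal-loading term with parameter $\lambda$ is a common regularization choice and an assumption here, not necessarily the setting used in this project.

$$
W(r) = \left[L(r)^\top C_\lambda^{-1} L(r)\right]^{-1} L(r)^\top C_\lambda^{-1},
\qquad
\hat{s}(r,t) = W(r)\,x(t),
\qquad
C_\lambda = C + \lambda\,\frac{\operatorname{tr}(C)}{N}\,I
$$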

The beamformer will be trained on data known to evoke emotional responses: subjects will perform a Hariri-hammer emotional faces task to "train" the beamformer, and the beamformer weights computed from that task will then be applied to the real-time data.
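A minimal NumPy sketch of the train-then-apply workflow, assuming epoched sensor data is available as plain arrays. The function names, the 5% diagonal loading, the 3-orientation lead field, the scalar feedback metric, and the random stand-in data are all hypothetical; in practice the lead field would come from the forward model for the amygdala sources and the training epochs from the Hariri-hammer recording.

```python
import numpy as np

def train_covariance(epochs):
    """Pool training epochs (n_epochs, n_channels, n_times) into one sensor covariance."""
    n_epochs, n_channels, n_times = epochs.shape
    data = epochs.transpose(1, 0, 2).reshape(n_channels, n_epochs * n_times)
    return np.cov(data)  # rows are channels; np.cov removes each channel's mean

def lcmv_weights(cov, leadfield, reg=0.05):
    """Unit-gain LCMV filter W = (L^T C^-1 L)^-1 L^T C^-1 for one source location.

    leadfield: (n_channels, n_orient) forward field of the target source.
    reg: diagonal loading as a fraction of the mean channel variance (assumed value).
    """
    n = cov.shape[0]
    cov_reg = cov + reg * np.trace(cov) / n * np.eye(n)
    cinv_l = np.linalg.solve(cov_reg, leadfield)            # C^-1 L
    return np.linalg.solve(leadfield.T @ cinv_l, cinv_l.T)  # (L^T C^-1 L)^-1 L^T C^-1

def apply_weights(weights, chunk):
    """Project one real-time sensor buffer (n_channels, n_samples) to source space."""
    return weights @ chunk

# Offline: "train" the filter once on stand-in task data.
rng = np.random.default_rng(0)
n_channels, n_orient = 20, 3
leadfield = rng.standard_normal((n_channels, n_orient))     # hypothetical amygdala forward field
hariri_epochs = rng.standard_normal((30, n_channels, 200))  # placeholder for Hariri-hammer epochs
weights = lcmv_weights(train_covariance(hariri_epochs), leadfield)

# Online: reuse the frozen weights on each incoming buffer.
chunk = rng.standard_normal((n_channels, 50))
source = apply_weights(weights, chunk)              # (n_orient, 50) amygdala time courses
amygdala_power = (source ** 2).sum(axis=0).mean()   # hypothetical scalar to drive the stimulus
```

Because the weights depend only on the training covariance and the lead field, they can be computed once offline; each real-time buffer then costs a single matrix multiply, which keeps the feedback loop's latency low.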

Data

```
datalad clone git@tako:/home/git/neurofeedback_proj
cd neurofeedback_proj
datalad get *
```

The data and code are set up in a semi-"YODA" layout: Code/Data/Results.

Progress

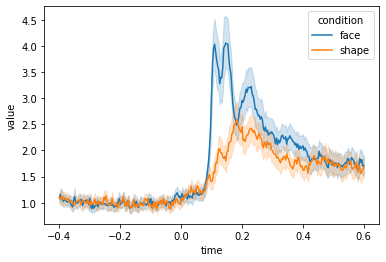

- --/--/-- - Calculated pre/post Hariri beamformer weights and projected the data onto the amygdala volumetric sources from the FreeSurfer aseg.mgz segmentation (outputs in results/stcs).
- 04/09/21 - Calculated beamformer weights from a resting-state data covariance (2-second epochs) and an empty-room noise covariance (2-second epochs), then projected the Hariri evoked faces data through them (outputs in results/stcs_hariri_rest).
- 04/16/21 - Built a common filter from the pre- and post-stimulus covariance of the shapes and faces conditions. Data normalized by the prestimulus average.
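A sketch of the 04/16/21 steps, assuming that "common filter" means one covariance pooled over both conditions and the full pre/post-stimulus window, and that "normalized by prestimulus average" means dividing source power by its mean prestimulus power. The function names, epoch window, channel count, fixed-orientation lead field, and random data are placeholders, not values from this project.

```python
import numpy as np

def common_covariance(*condition_epochs):
    """Pool every condition's epochs (n_epochs, n_channels, n_times), pre- and
    post-stimulus samples alike, into one covariance for a single common filter."""
    data = [ep.transpose(1, 0, 2).reshape(ep.shape[1], -1) for ep in condition_epochs]
    return np.cov(np.concatenate(data, axis=1))

def baseline_normalize(power, times):
    """Divide source power (n_sources, n_times) by its mean over times < 0 (prestimulus)."""
    baseline = power[:, times < 0].mean(axis=1, keepdims=True)
    return power / baseline

rng = np.random.default_rng(1)
n_channels, n_times = 20, 120
times = np.linspace(-0.2, 0.5, n_times)                  # assumed epoch window in seconds
shapes = rng.standard_normal((25, n_channels, n_times))  # placeholder shapes epochs
faces = rng.standard_normal((25, n_channels, n_times))   # placeholder faces epochs

cov = common_covariance(shapes, faces)
leadfield = rng.standard_normal((n_channels, 1))  # hypothetical fixed-orientation amygdala source
cinv_l = np.linalg.solve(cov, leadfield)          # C^-1 l
weights = (cinv_l / (leadfield.T @ cinv_l)).T     # unit-gain LCMV filter, shape (1, n_channels)

# The same common filter is applied to each condition's evoked response,
# so condition differences cannot come from different spatial filters.
normalized = {
    name: baseline_normalize((weights @ ep.mean(axis=0)) ** 2, times)
    for name, ep in (("shapes", shapes), ("faces", faces))
}
```

Using one filter for both conditions is what makes the shapes-versus-faces comparison fair: any difference in the normalized amygdala power reflects the data, not a change in the beamformer.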